The Challenge

Real-Time Visuals for Live Performance

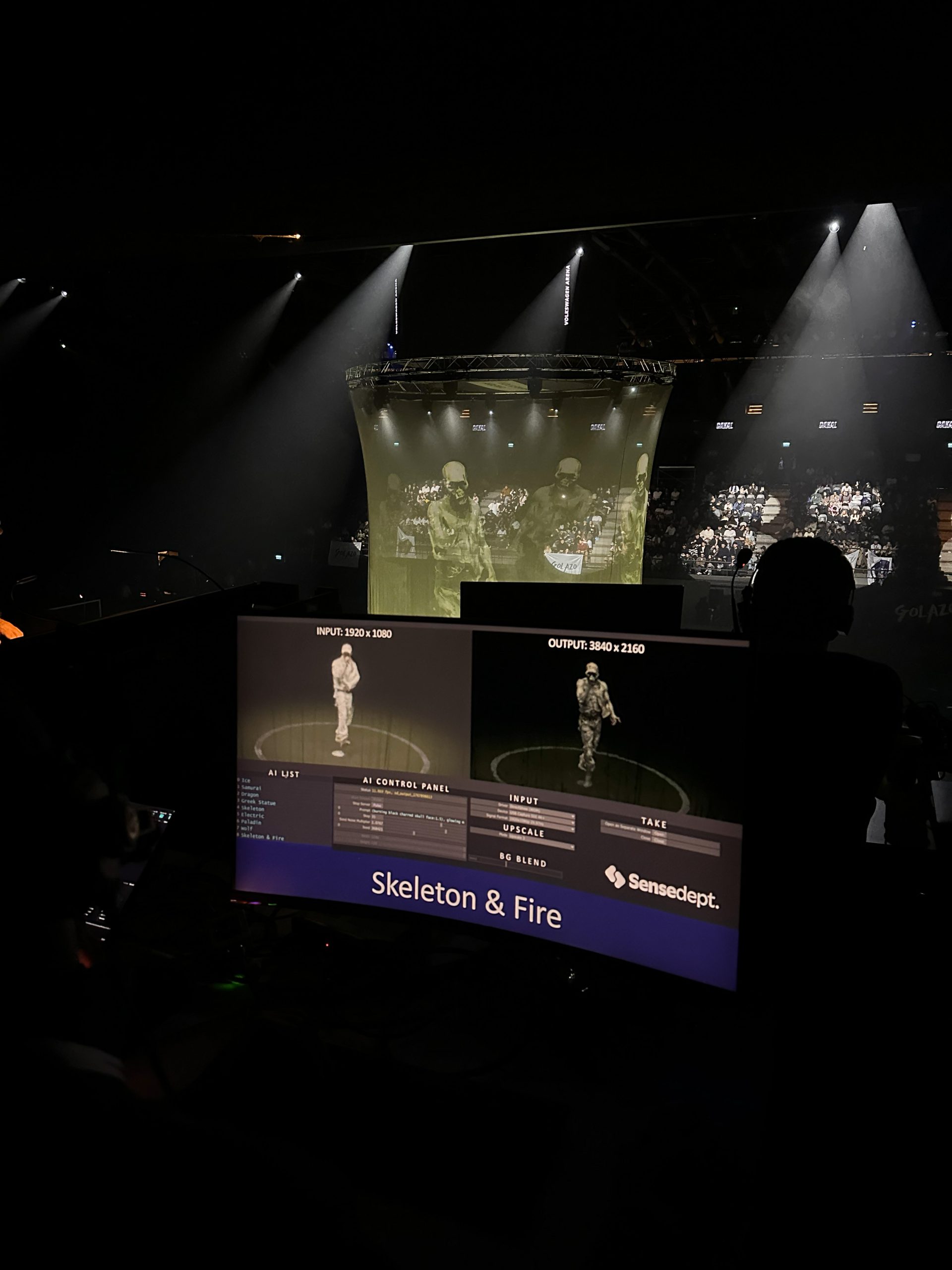

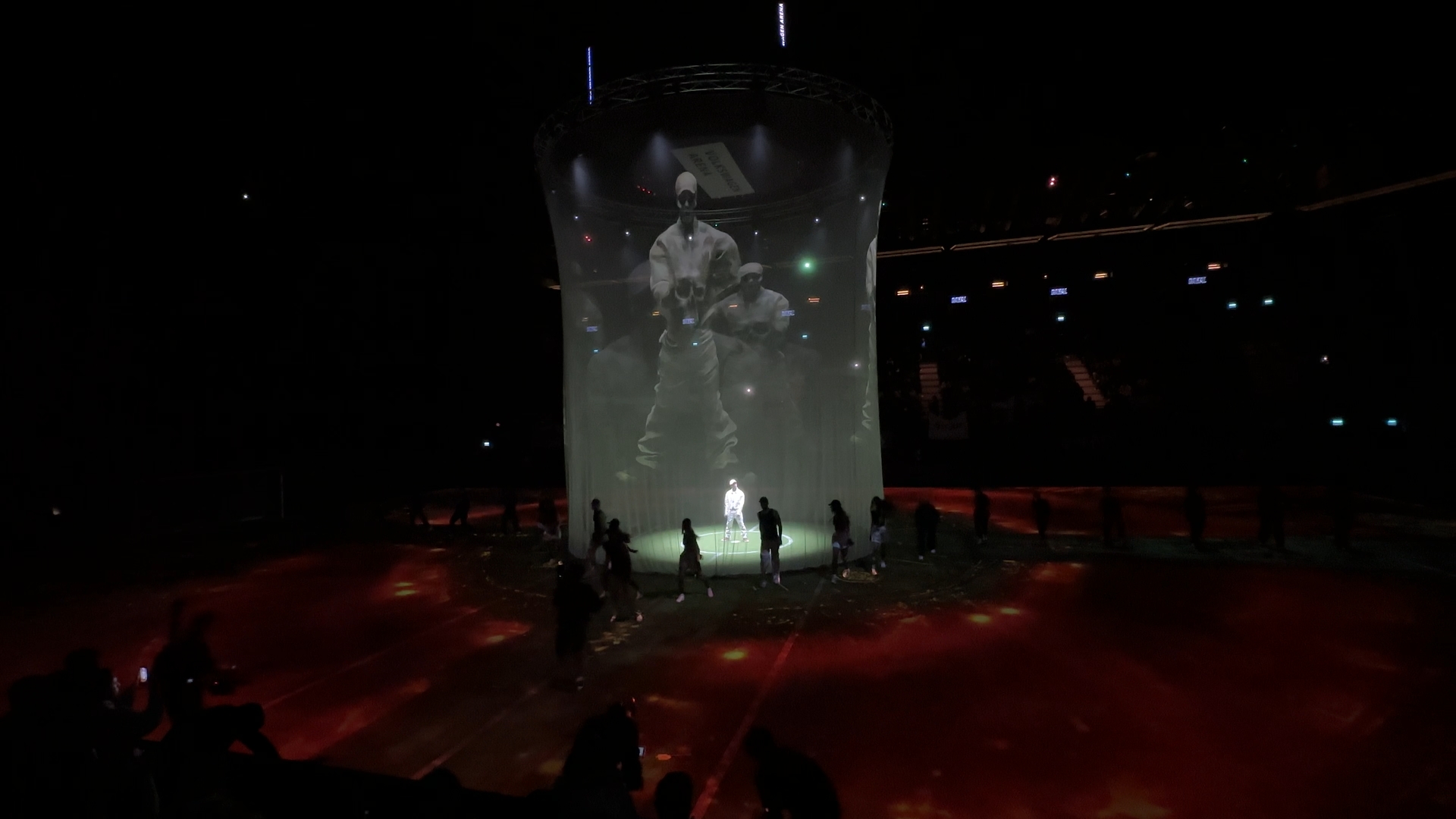

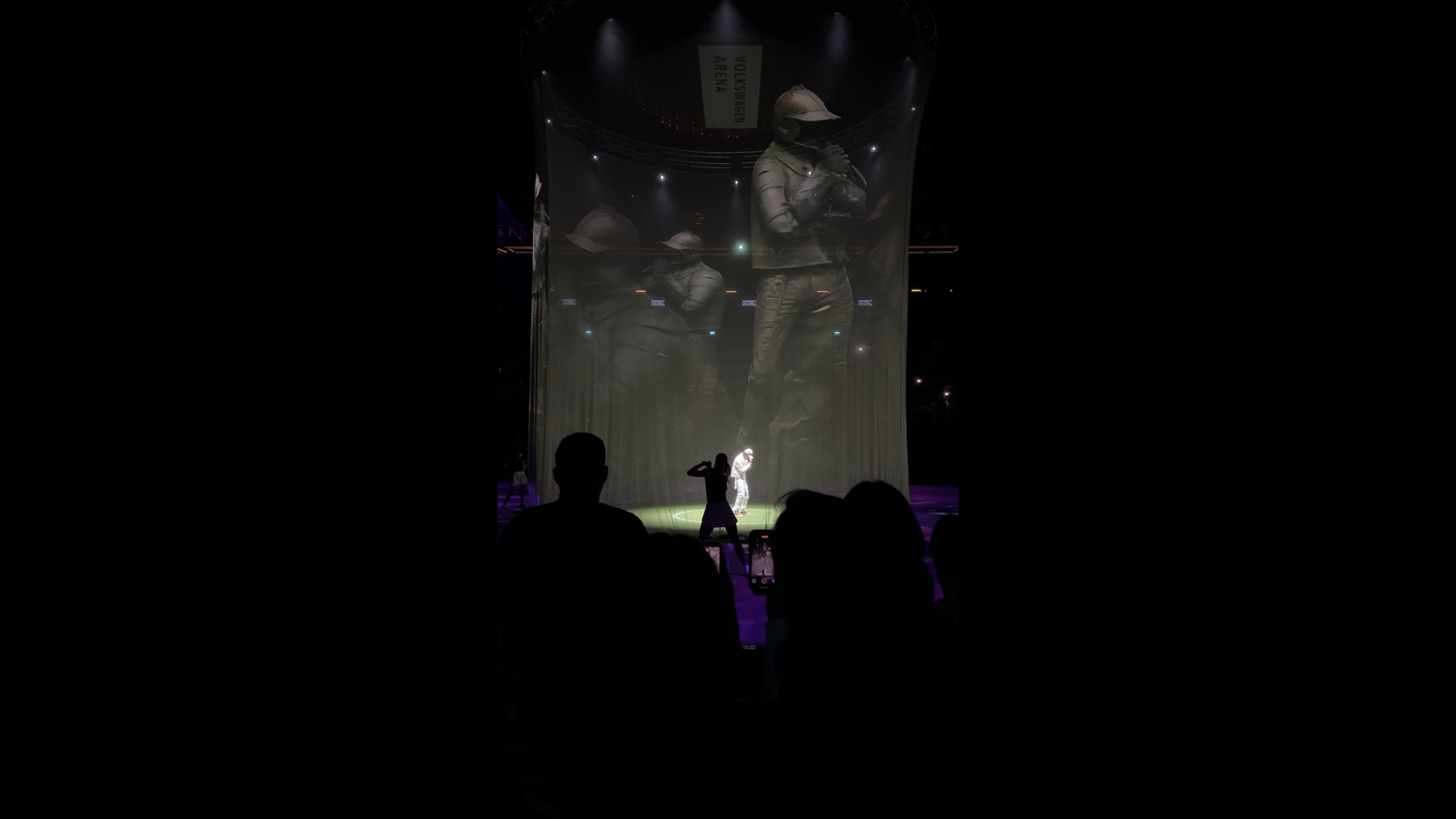

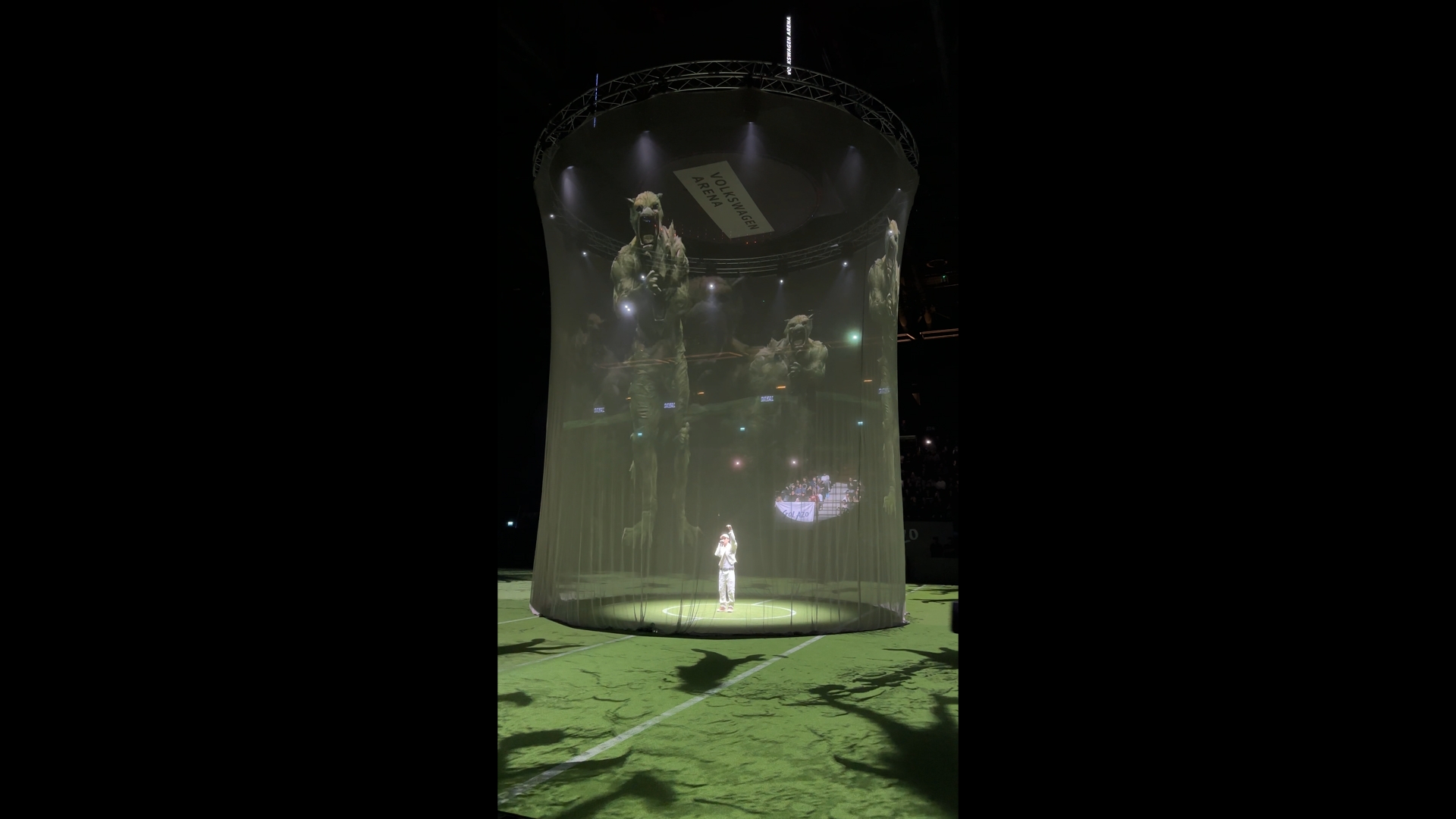

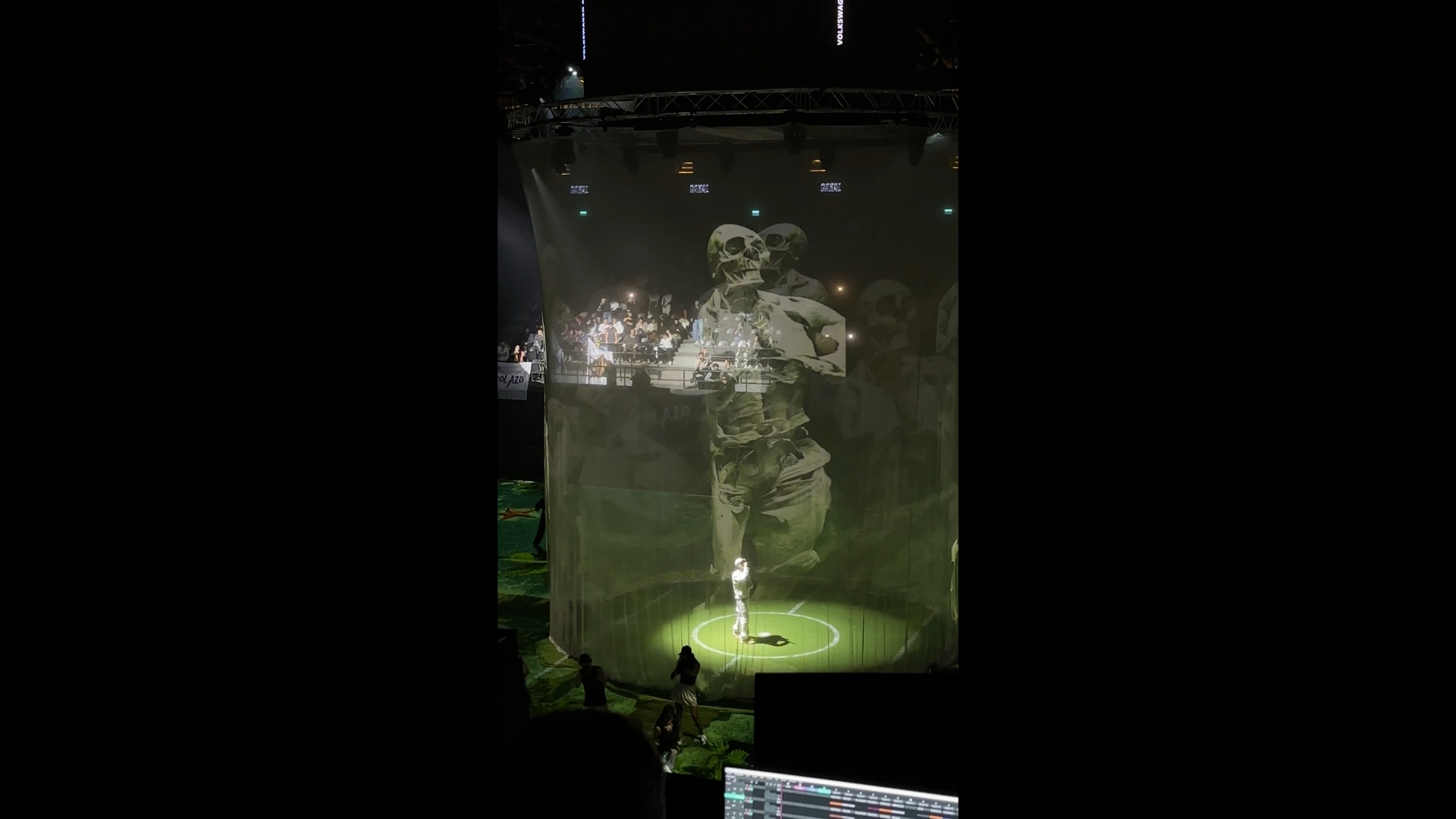

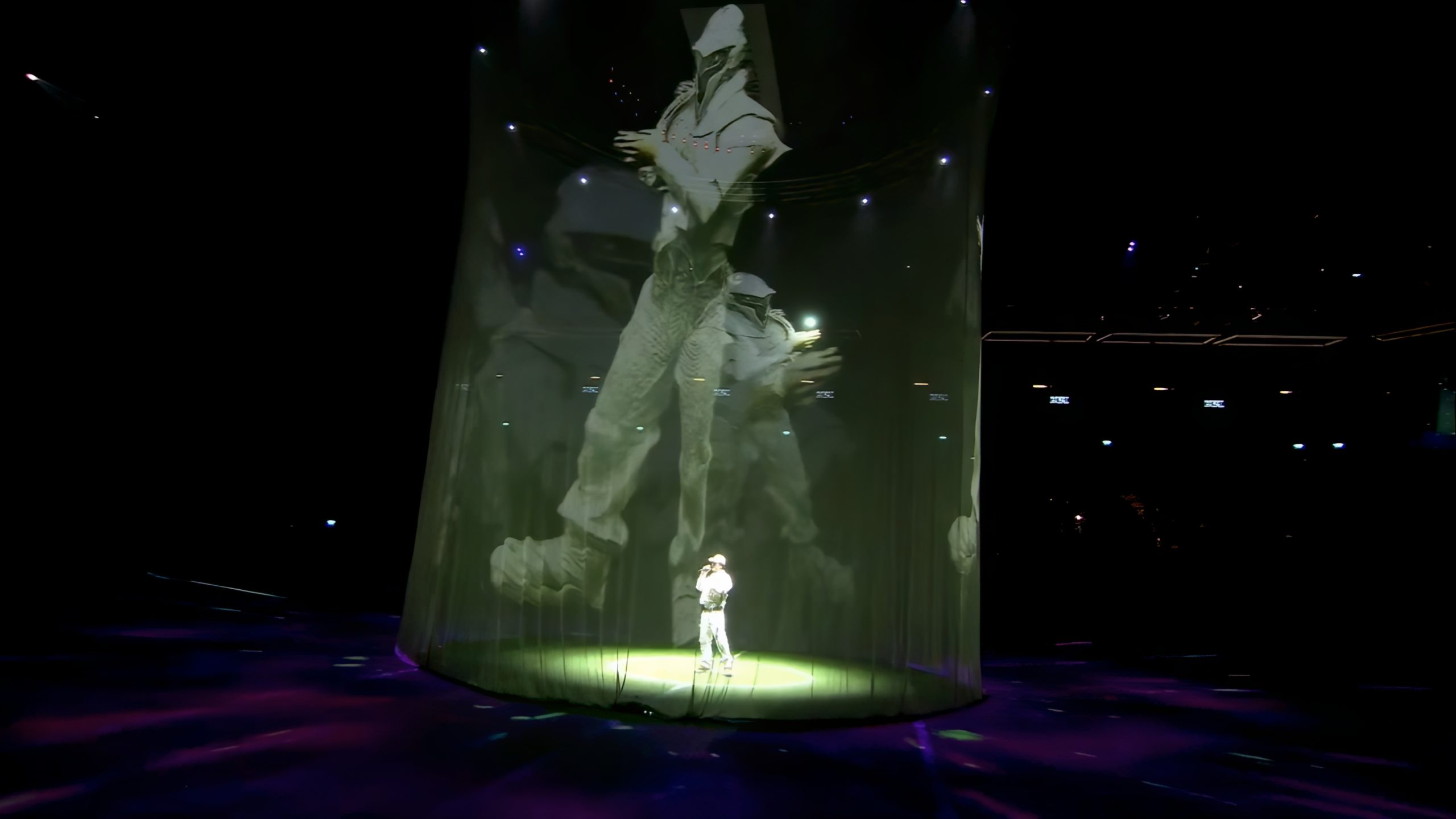

This project for Emirhan Çakal (Çakal), one of Turkey’s most popular rap artists, focused on building a real-time visual system that places live camera imagery at the core of the concert experience. The goal was to go beyond simply relaying live feeds to the screens and make them an integral part of the performance narrative.

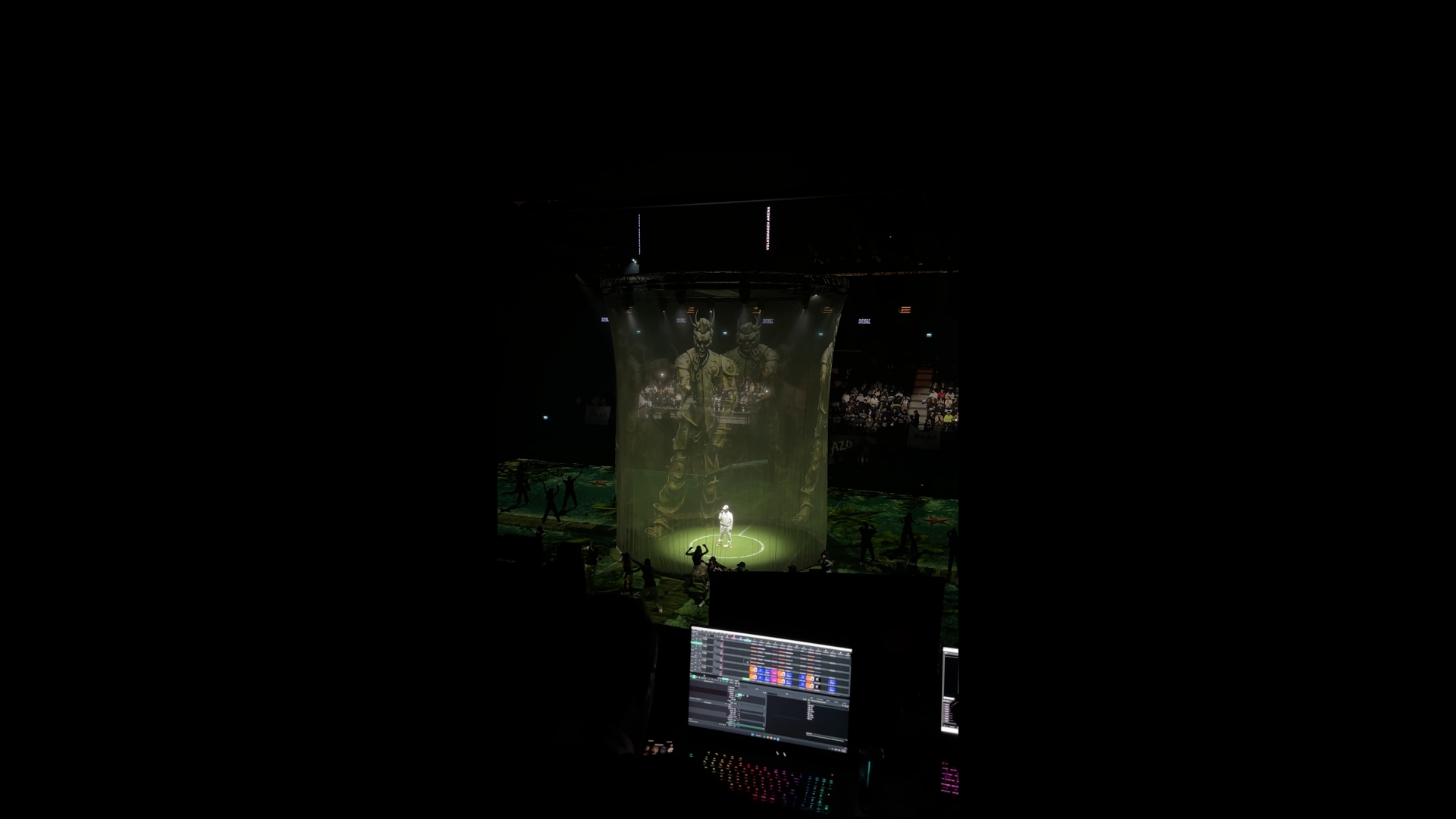

Live camera feeds were routed simultaneously into TouchDesigner and Unreal Engine, and the system was designed to operate fully in real time. Kinect-like sensors and tracking devices captured and analyzed movement, silhouette, and body data instantly, and this data became the primary input for the visual effects pipeline.

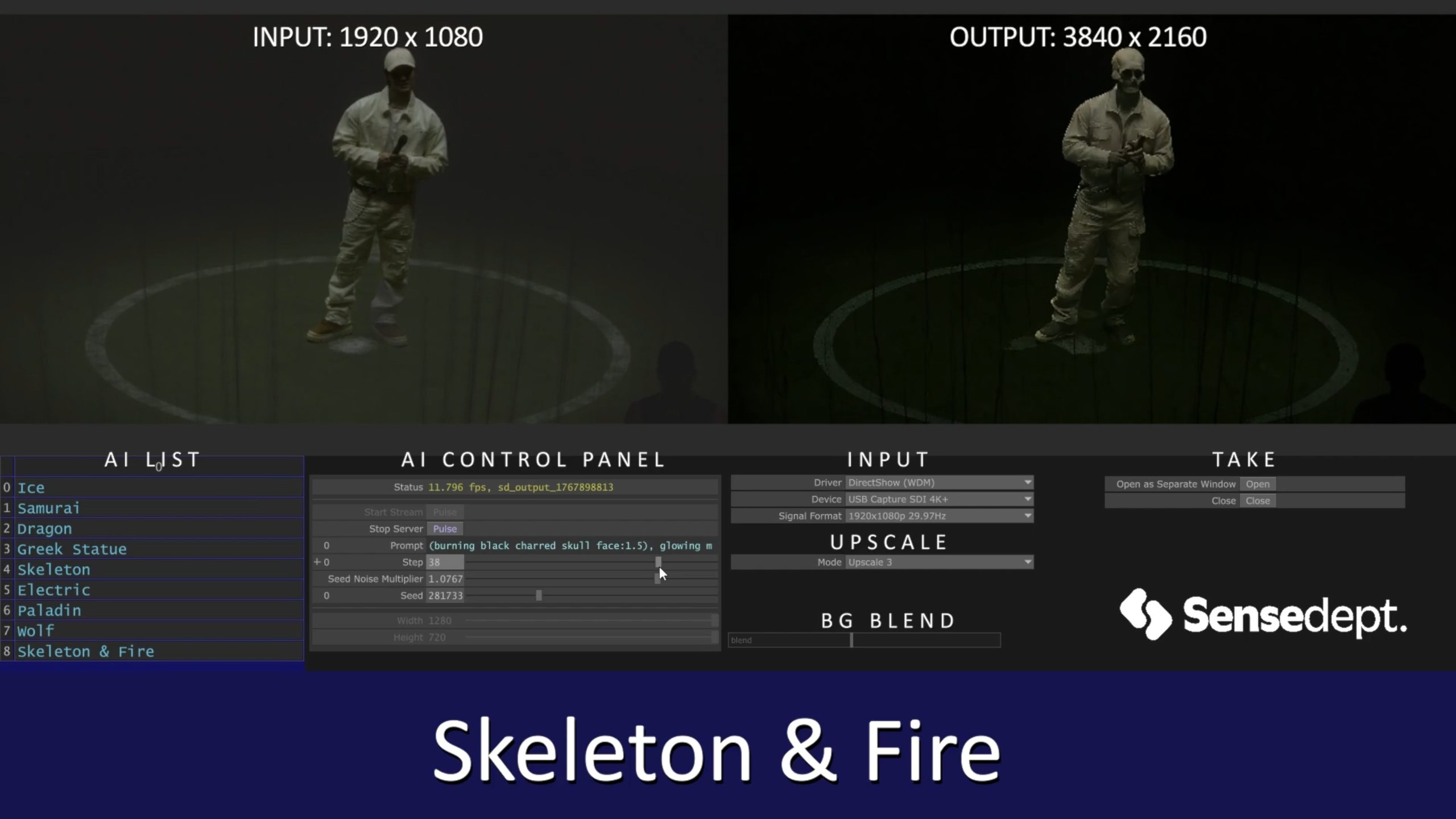

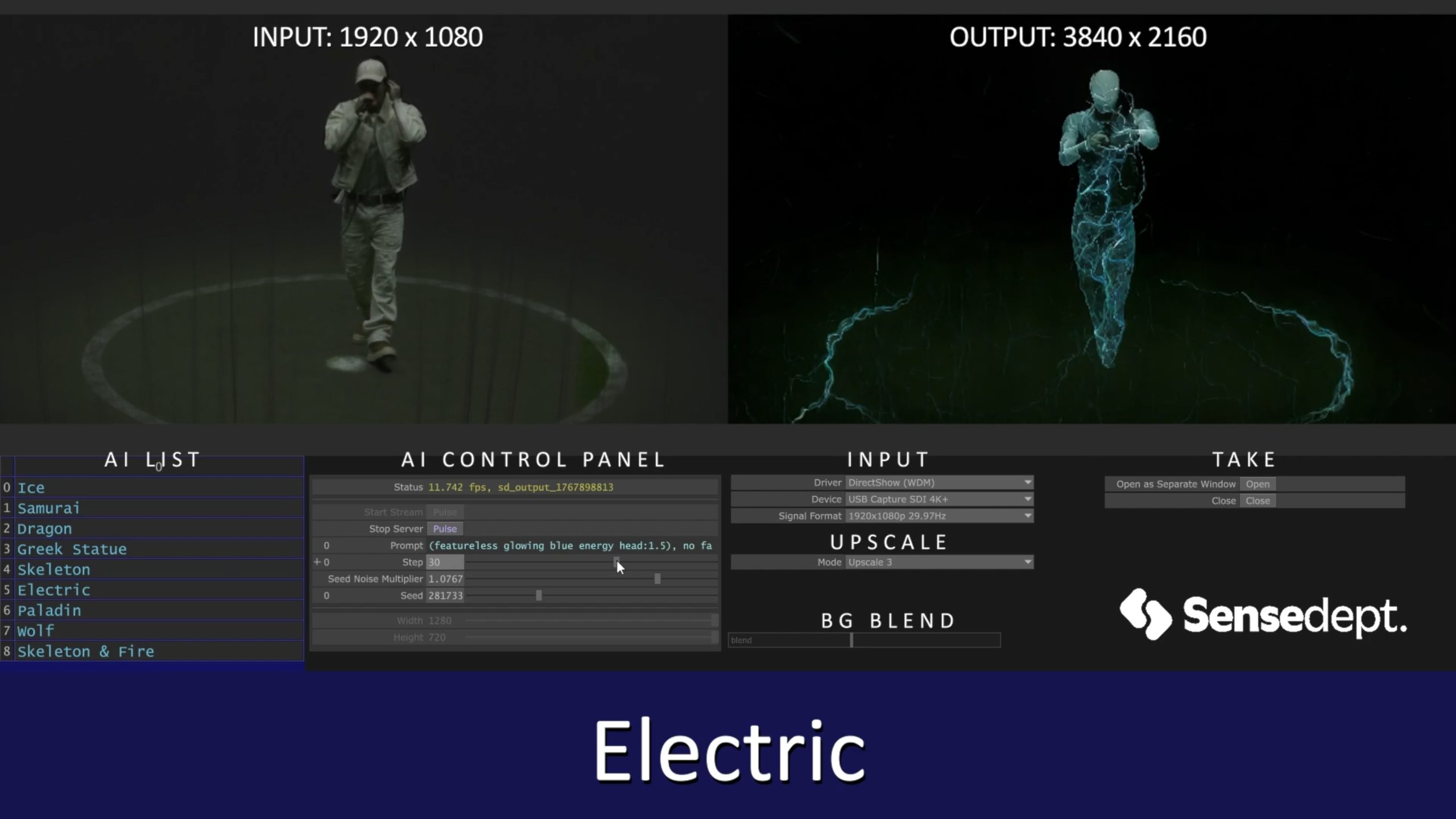

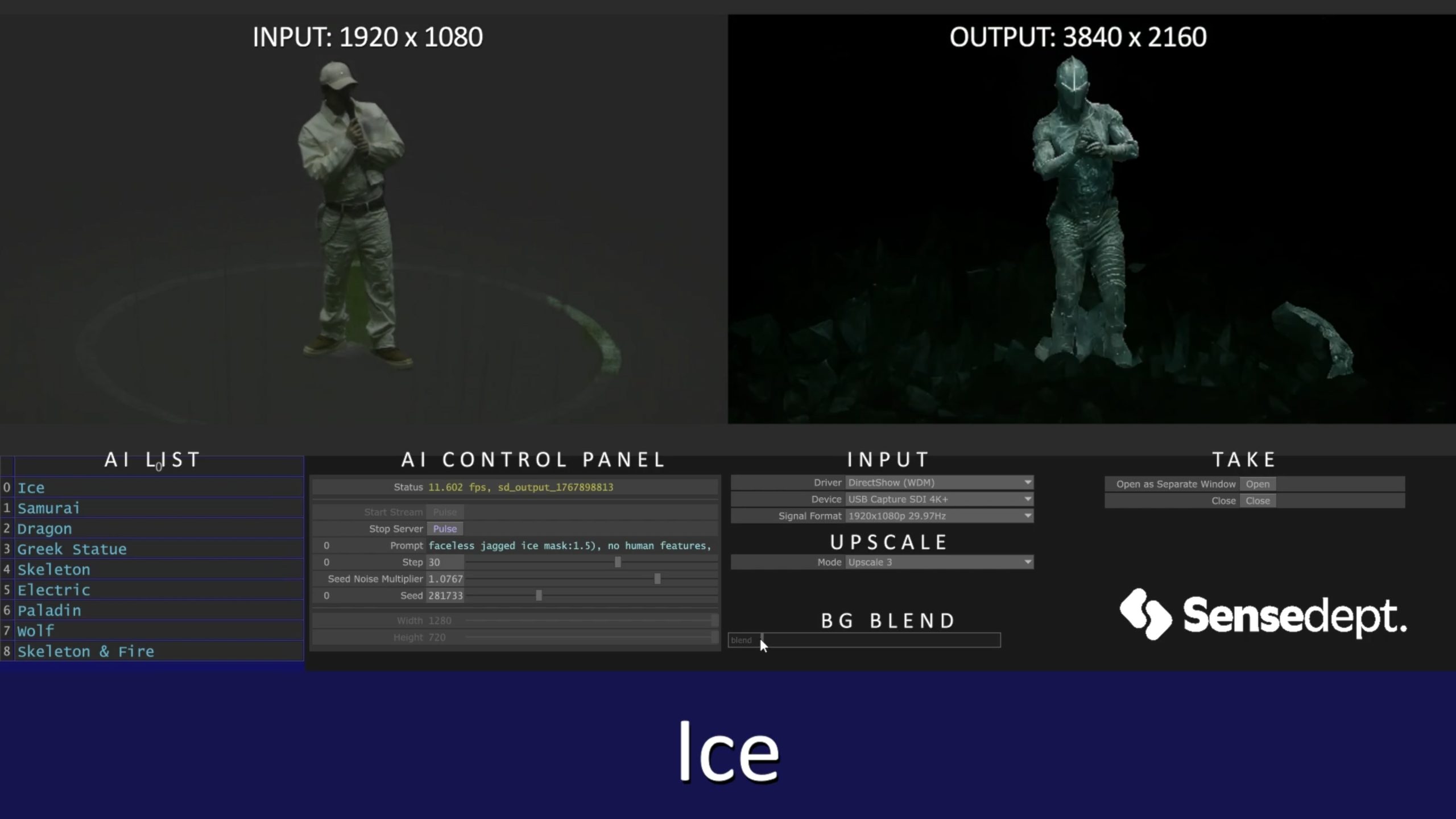

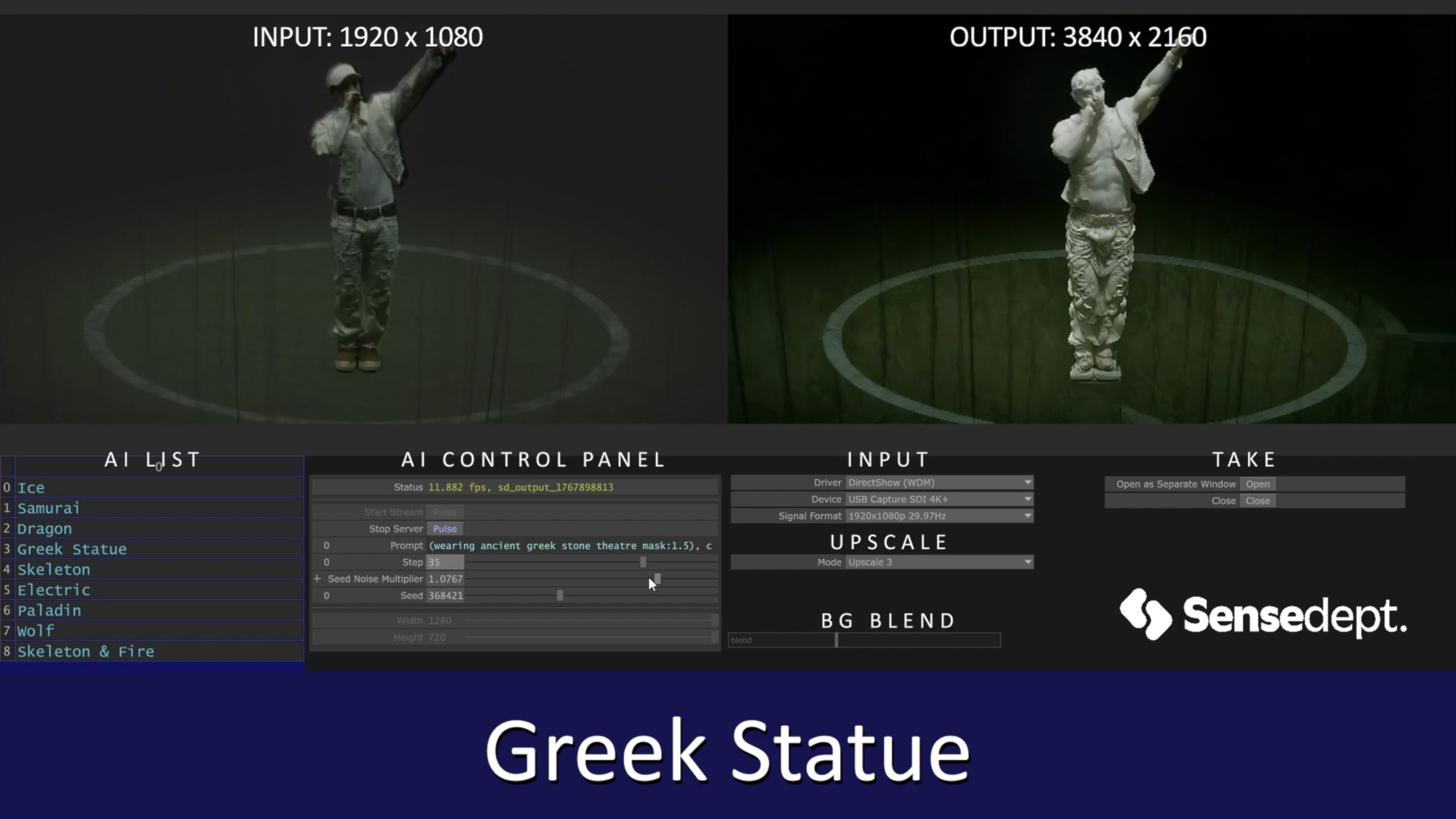

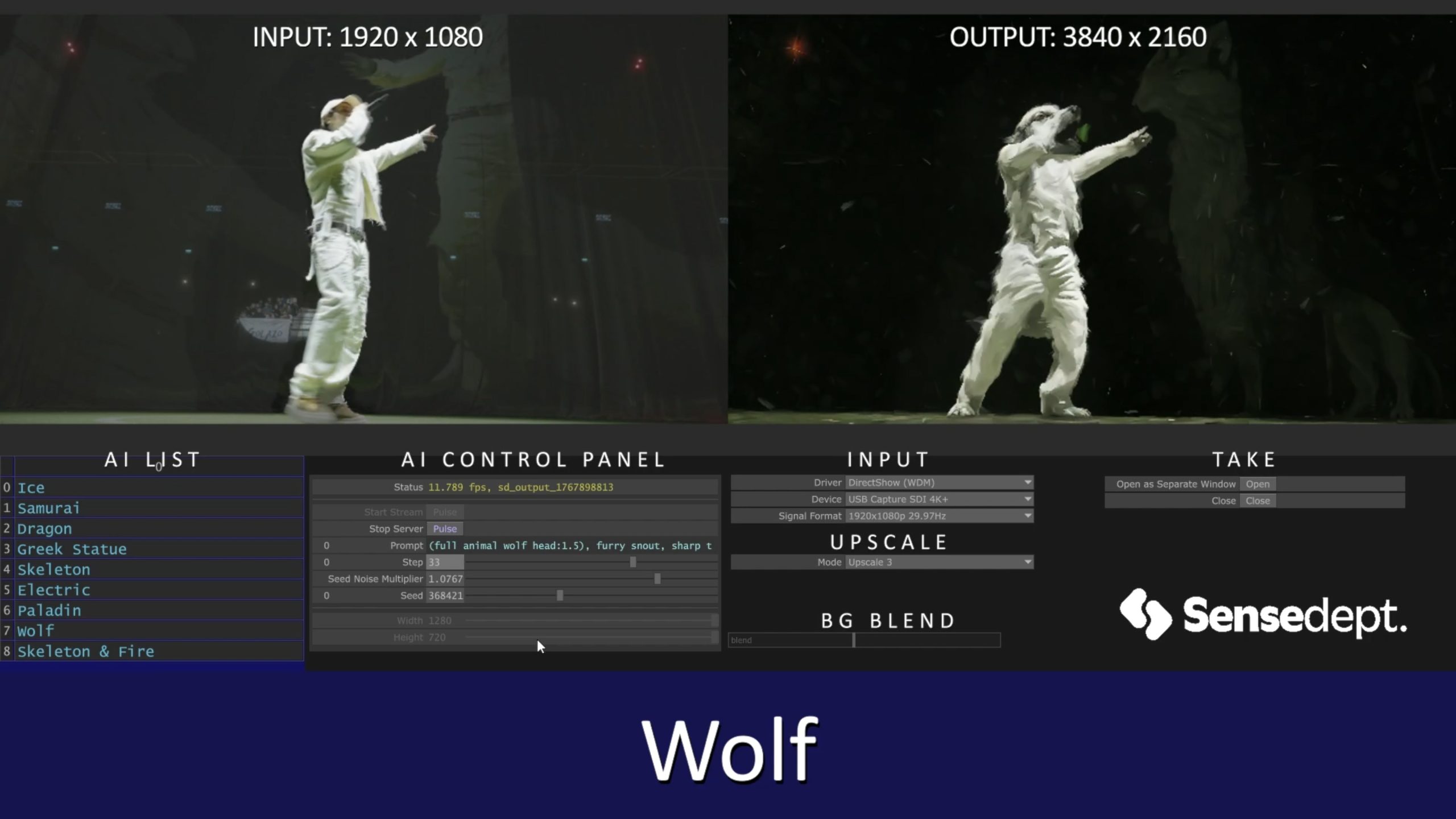

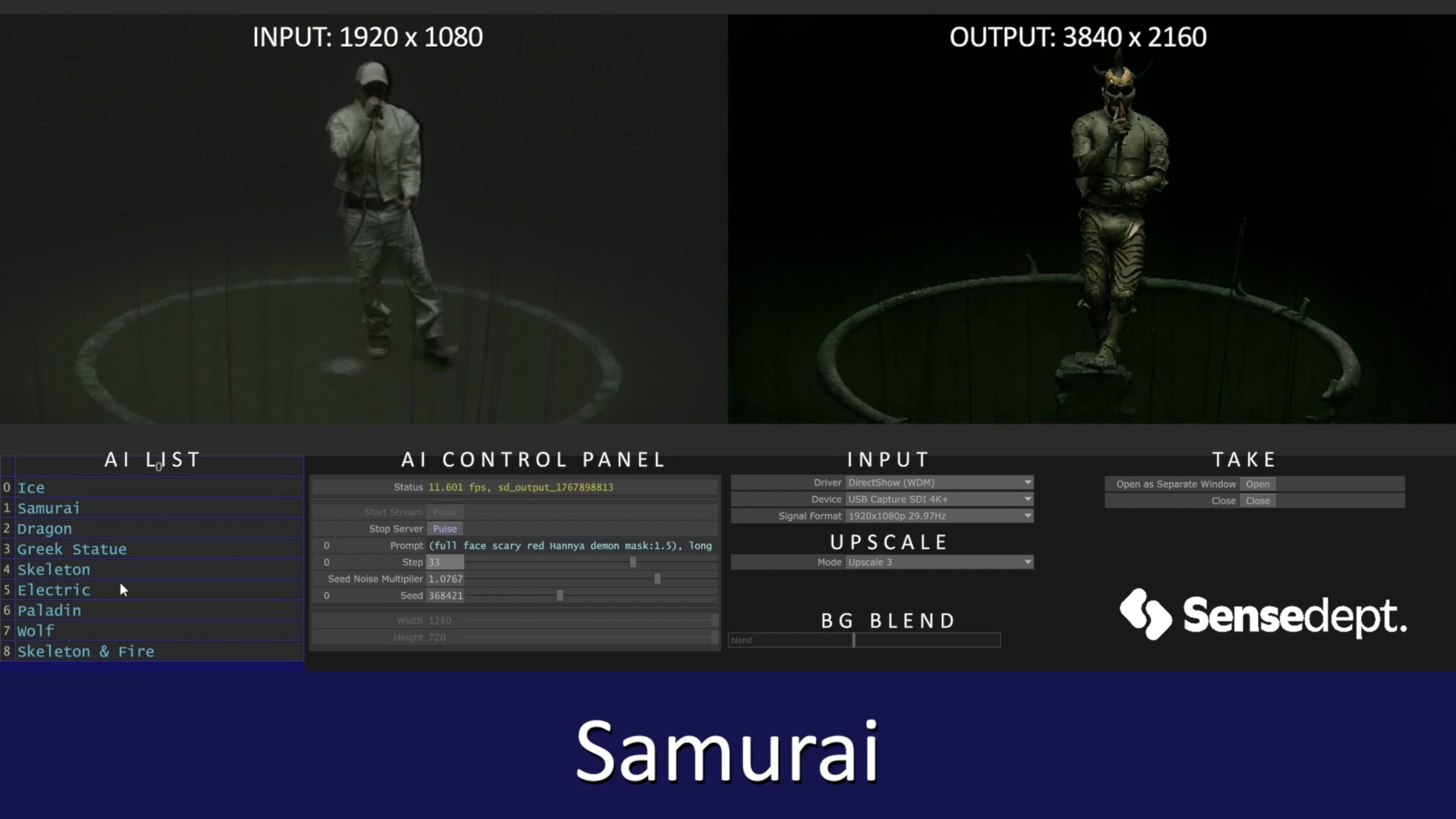

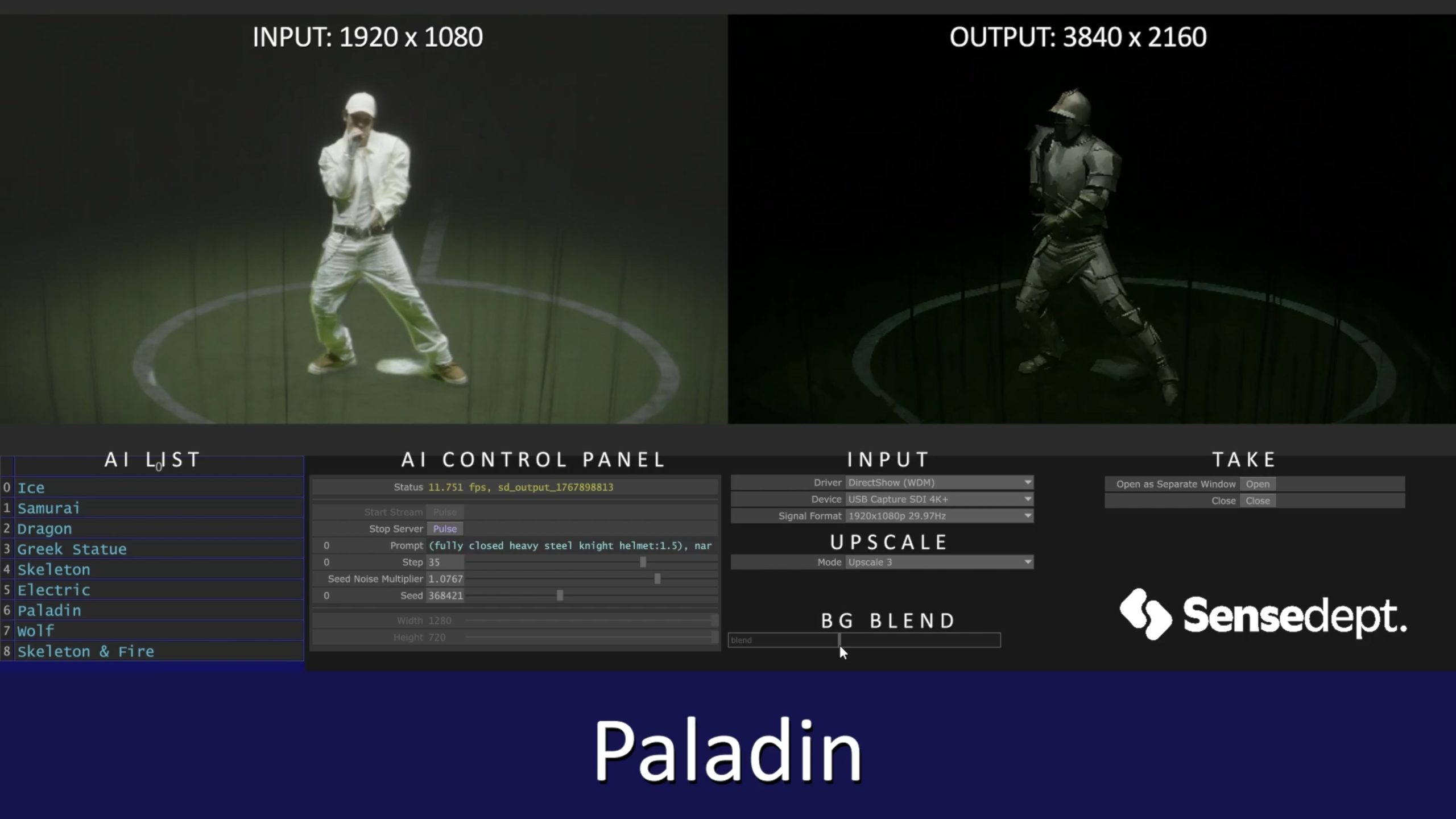

Within TouchDesigner, AI-driven tools and generative systems transformed the artist into different visual characters during the performance. Forms such as a knight, a werewolf, a skeleton, an electric figure, and an ice figure were generated live and displayed on the stage screens without interrupting the flow of the concert or introducing noticeable latency.

The digital environment built in Unreal Engine was tightly integrated with the data and effect streams coming from TouchDesigner, reinforcing the overall visual atmosphere of the stage. In this setup, camera imagery evolved from a merely processed visual into an active element that carried and shaped the stage narrative.

The final outcome presents a cohesive live performance experience, demonstrating how concert camera feeds can be reinterpreted through sensor data, artificial intelligence, and real-time visual systems.

Equipment

The visual system for this project was designed to combine live camera feeds, sensor data, and real-time effect generation within a single pipeline. TouchDesigner and Unreal Engine formed the core of the system, running on NVIDIA RTX 5090 GPUs to ensure low latency and stable real-time performance. This setup enabled seamless integration between live visuals and the digital stage environment.

Kinect sensors and tracking systems captured movement and body data in real time, and this data directly drove the visual transformations displayed on stage during the performance.

Credits

Resolume Operator: Atakan Dilsiz

Artist Management: Berkay Cosgun

Stage Manager: Emre Yolgiden

Sensor & Real-Time System Setup: Mevlüt Uslu

Real-Time Content Operator: Mevlüt Uslu

Real-Time Content Design & Production: Sense Department